Stream processing is a computer programming paradigm, equivalent to dataflow programming, event stream processing, and reactive programming,[1] that allows some applications to more easily exploit a limited form of parallel processing. Such applications can use multiple computational units, such as the floating point unit on a graphics processing unit or field-programmable gate arrays (FPGAs),[2] without explicitly managing allocation, synchronization, or communication among those units.

The stream processing paradigm simplifies parallel software and hardware by restricting the parallel computation that can be performed. Given a sequence of data (a stream), a series of operations (kernel functions) is applied to each element in the stream. Kernel functions are usually pipelined, and optimal local on-chip memory reuse is attempted in order to minimize the bandwidth lost to external memory interaction. Uniform streaming, where one kernel function is applied to all elements in the stream, is typical. Since the kernel and stream abstractions expose data dependencies, compiler tools can fully automate and optimize on-chip management tasks. Stream processing hardware can use scoreboarding, for example, to initiate a direct memory access (DMA) when dependencies become known. Eliminating manual DMA management reduces software complexity, and the associated elimination of hardware-cached I/O reduces the data area that has to be serviced by specialized computational units such as arithmetic logic units.
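As a minimal sketch of the kernel-and-stream idea described above, Python generators can stand in for pipelined hardware kernels. The kernel names `scale` and `offset` are hypothetical and chosen only for illustration; nothing here models on-chip memory or DMA.

```python
from typing import Callable, Iterable, Iterator, List


def apply_kernel(kernel: Callable[[float], float],
                 stream: Iterable[float]) -> Iterator[float]:
    """Uniform streaming: apply one kernel to every element, lazily."""
    for element in stream:
        yield kernel(element)


def scale(x: float) -> float:
    # Hypothetical kernel: multiply each element by 2.
    return 2.0 * x


def offset(x: float) -> float:
    # Hypothetical kernel: add 1 to each element.
    return x + 1.0


def run_pipeline(stream: Iterable[float]) -> List[float]:
    # Kernels are chained, so each element flows through scale, then offset,
    # without the intermediate stream ever being stored as a whole.
    return list(apply_kernel(offset, apply_kernel(scale, stream)))
```

Because each kernel only sees one element at a time, the data dependencies between stages are explicit in the way the generators are nested, which is the property the paragraph above attributes to the stream abstraction.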

During the 1980s stream processing was explored within dataflow programming. An example is the language SISAL (Streams and Iteration in a Single Assignment Language).

Applications

Stream processing is essentially a compromise, driven by a […] a loop internally. This allows throughput to scale with chip complexity, easily utilizing hundreds of ALUs.[3][4] The elimination of complex data patterns makes much of this extra power available.

While stream processing is a branch of SIMD/MIMD processing, they must not be confused. Although SIMD implementations can often work in a 'streaming' manner, their performance is not comparable: the model envisions a very different usage pattern which allows far greater performance by itself.

It has been noted that when applied on generic processors such as standard CPUs, only a 1.5x speedup can be reached.[5] By contrast, ad-hoc stream processors easily reach over 10x performance, mainly attributed to more efficient memory access and higher levels of parallel processing.[6]

Although there are various degrees of flexibility allowed by the model, stream processors usually impose some limitations on the kernel or stream size. For example, consumer hardware often lacks the ability to perform high-precision math, lacks complex indirection chains or presents lower limits on the number of instructions which can be executed.

Research

Stanford University stream processing projects included the Stanford Real-Time Programmable Shading Project, started in 1999.[7] A prototype called Imagine was developed in 2002.[8] A project called Merrimac ran until about 2004.[9] AT&T also researched stream-enhanced processors as graphics processing units rapidly evolved in both speed and functionality.[1] Since these early days, dozens of stream processing languages have been developed, as well as specialized hardware.

Programming model notes

The most immediate challenge in the realm of parallel processing lies not so much in the type of hardware architecture used as in how easy it is to program the system in a real-world environment with acceptable performance. Machines like Imagine use a straightforward single-threaded model with automated dependencies, memory allocation and DMA scheduling. This in itself is a result of the research at MIT and Stanford into finding an optimal layering of tasks between programmer, tools and hardware. Programmers beat tools at mapping algorithms to parallel hardware, and tools beat programmers at working out the smartest memory allocation schemes, etc. Of particular concern are MIMD designs such as Cell, for which the programmer needs to deal with application partitioning across multiple cores as well as process synchronization and load balancing. Efficient multi-core programming tools are severely lacking today.

A drawback of SIMD programming was the issue of Array-of-Structures (AoS) and Structure-of-Arrays (SoA). Programmers often wanted to build data structures with a 'real' meaning, for example:

What happened is that those structures were then assembled in arrays to keep things nicely organized. This is array of structures (AoS).

When the structure is laid out in memory, the compiler will produce interleaved data, in the sense that all the structures will be contiguous but there will be a constant offset between, say, the 'size' attribute of a structure instance and the same element of the following instance. The offset depends on the structure definition (and possibly other things not considered here, such as the compiler's policies).

There are also other problems. For example, the three position variables cannot be SIMD-ized that way, because there is no guarantee that they will be allocated in contiguous memory. To make sure SIMD operations can work on them, they must be grouped in a 'packed memory location' or at least in an array.

Another problem is that both 'color' and 'xyz' are defined as three-component vector quantities. SIMD processors usually support 4-component operations only (with some exceptions).
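As a rough illustration of the interleaved layout described above, the following Python sketch packs records with the standard `struct` module. The field list (three floats for position, three bytes for color, one float for size) is an assumption based on the attributes named in the text, and padding is disabled for simplicity, so a real C compiler may well produce different offsets.

```python
import struct

# Assumed particle record (hypothetical): x, y, z as float32, three uint8
# color channels, then size as float32. "<" means little-endian, no padding.
PARTICLE = struct.Struct("<3f3Bf")


def pack_aos(particles):
    """Pack (x, y, z, r, g, b, size) tuples into one interleaved buffer."""
    return b"".join(PARTICLE.pack(*p) for p in particles)


def size_offsets(count):
    """Byte offset of the 'size' field in each of `count` consecutive records."""
    bytes_before_size = struct.calcsize("<3f3B")
    return [i * PARTICLE.size + bytes_before_size for i in range(count)]
```

With this assumed layout each record occupies 19 bytes, so the 'size' fields of neighbouring records sit a constant 19 bytes apart, with position and color data in between. That gap is what makes the attribute awkward to load into a SIMD register in one step.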

These kinds of problems and limitations made SIMD acceleration on standard CPUs quite nasty. The proposed solution, structure of arrays (SoA), follows as:

For readers not experienced with C, the '*' before each identifier means a pointer. In this case, they will be used to point to the first element of an array, which is to be allocated later. For Java programmers, this is roughly equivalent to '[]'.

The drawback here is that the various attributes could be spread out in memory. To make sure this does not cause cache misses, we'll have to update all the various 'reds', then all the 'greens' and 'blues'.
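The structure-of-arrays idea can be sketched in Python with the standard `array` module, which stores each attribute in its own contiguous buffer. The class name `ParticlesSoA`, its fields and the `brighten` helper are hypothetical and are not the listing the text refers to.

```python
from array import array


class ParticlesSoA:
    """Structure of arrays: one contiguous typed array per attribute."""

    def __init__(self, count):
        self.x = array("f", [0.0] * count)
        self.y = array("f", [0.0] * count)
        self.z = array("f", [0.0] * count)
        self.red = array("B", [0] * count)
        self.green = array("B", [0] * count)
        self.blue = array("B", [0] * count)
        self.size = array("f", [1.0] * count)


def brighten(particles, amount):
    """Update one channel at a time, so each pass walks a single array."""
    for channel in (particles.red, particles.green, particles.blue):
        for i in range(len(channel)):
            # Clamp to the valid range of an unsigned byte.
            channel[i] = max(0, min(255, channel[i] + amount))
```

The loop order in `brighten` follows the advice above: all 'reds' are processed first, then all 'greens', then all 'blues', so every pass touches one contiguous region of memory.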

For stream processors, the usage of structures is encouraged. From an application point of view, all the attributes can be defined with some flexibility.

Taking GPUs as reference, there is a set of attributes (at least 16) available. For each attribute, the application can state the number of components and the format of the components (but only primitive data types are supported for now). The various attributes are then attached to a memory block, possibly defining a stride between 'consecutive' elements of the same attributes, effectively allowing interleaved data.

When the GPU begins the stream processing, it gathers all the various attributes into a single set of parameters (usually this looks like a structure or a 'magic global variable'), performs the operations and scatters the results to some memory area for later processing (or retrieval).

More modern stream processing frameworks provide a FIFO-like interface to structure data as a literal stream. This abstraction provides a means to specify data dependencies implicitly while enabling the runtime/hardware to take full advantage of that knowledge for efficient computation. One stream processing modality for C++ is RaftLib, which enables linking independent compute kernels together as a data flow graph using C++ stream operators. As an example:
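The RaftLib listing is not reproduced here. As a sketch of the FIFO idea only, the following Python code links independent kernels with queues from the standard library. This is not RaftLib's API: the function names `source`, `kernel` and `run_graph` are hypothetical, and threads stand in for whatever scheduling a real runtime would use.

```python
import queue
import threading

_END = object()  # sentinel marking the end of a stream


def source(values, out_fifo):
    """Push every value into the first FIFO, then signal end of stream."""
    for value in values:
        out_fifo.put(value)
    out_fifo.put(_END)


def kernel(fn, in_fifo, out_fifo):
    """Read items from one FIFO, apply fn, write results to the next FIFO."""
    while True:
        item = in_fifo.get()
        if item is _END:
            out_fifo.put(_END)
            return
        out_fifo.put(fn(item))


def run_graph(values, fns):
    """Link source -> fns[0] -> fns[1] -> ... with one FIFO per edge."""
    fifos = [queue.Queue() for _ in range(len(fns) + 1)]
    threads = [threading.Thread(target=source, args=(values, fifos[0]))]
    for i, fn in enumerate(fns):
        threads.append(threading.Thread(
            target=kernel, args=(fn, fifos[i], fifos[i + 1])))
    for thread in threads:
        thread.start()
    results = []
    while True:
        item = fifos[-1].get()
        if item is _END:
            break
        results.append(item)
    for thread in threads:
        thread.join()
    return results
```

The data dependencies are never stated explicitly. They follow from which FIFO each kernel reads and writes, which is the implicit specification the paragraph above describes.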

Models of computation for stream processing

Apart from specifying streaming applications in high-level languages, models of computation (MoCs) such as dataflow models and process-based models have also been widely used.

Generic processor architecture

Historically, CPUs began implementing various tiers of memory access optimizations because their performance kept increasing faster than the relatively slow-growing external memory bandwidth. As this gap widened, large amounts of die area were dedicated to hiding memory latencies. Since fetching information and opcodes to those few ALUs is expensive, very little die area is dedicated to actual mathematical machinery (as a rough estimate, consider it to be less than 10%).

A similar architecture exists on stream processors, but thanks to the new programming model, the number of transistors dedicated to management is actually very small.

Beginning from a whole system point of view, stream processors usually exist in a controlled environment. GPUs do exist on an add-in board (this seems to also apply to Imagine). CPUs do the dirty job of managing system resources, running applications and such.

The stream processor is usually equipped with a fast, efficient, proprietary memory bus (crossbar switches are now common; multi-buses have been employed in the past). The exact number of memory lanes depends on the market range. As this is written, there are still 64-bit wide interconnections around (entry-level). Most mid-range models use a fast 128-bit crossbar switch matrix (4 or 2 segments), while high-end models deploy huge amounts of memory (actually up to 512 MB) with a slightly slower crossbar that is 256 bits wide. By contrast, standard processors from Intel Pentium to some Athlon 64 have only a single 64-bit wide data bus.

Memory access patterns are much more predictable. While arrays do exist, their dimension is fixed at kernel invocation. The thing which most closely matches a multiple pointer indirection is an indirection chain, which is however guaranteed to finally read or write from a specific memory area (inside a stream).

Because of the SIMD nature of the stream processor's execution units (ALU clusters), read/write operations are expected to happen in bulk, so memories are optimized for high bandwidth rather than low latency (this is a difference from Rambus and DDR SDRAM, for example). This also allows for efficient memory bus negotiations.

Most (90%) of a stream processor's work is done on-chip, requiring only 1% of the global data to be stored to memory. This is where knowing the kernel temporaries and dependencies pays.

Internally, a stream processor features some clever communication and management circuits, but what's interesting is the Stream Register File (SRF). This is conceptually a large cache in which stream data is stored to be transferred to external memory in bulk. As a cache-like, software-controlled structure serving the various ALUs, the SRF is shared between all the ALU clusters. The key concept and innovation of Stanford's Imagine chip is that the compiler is able to automate and allocate memory in an optimal way, fully transparent to the programmer. The dependencies between kernel functions and data are known through the programming model, which enables the compiler to perform flow analysis and optimally pack the SRFs. Commonly, this cache and DMA management can take up the majority of a project's schedule, something the stream processor (or at least Imagine) totally automates. Tests done at Stanford showed that the compiler did as good a job of scheduling memory as hand tuning with much effort, or a better one.

There can be a lot of clusters because inter-cluster communication is assumed to be rare. Internally, however, each cluster can efficiently exploit far fewer ALUs because intra-cluster communication is common and thus needs to be highly efficient.

To keep those ALUs fed with data, each ALU is equipped with local register files (LRFs), which are basically its usable registers.

This three-tiered data access pattern makes it easy to keep temporary data away from slow memories, thus making the silicon implementation highly efficient and power-saving.

Hardware-in-the-loop issues

Although an order of magnitude speedup can be reasonably expected (even from mainstream GPUs when computing in a streaming manner), not all applications benefit from this. Communication latencies are actually the biggest problem. Although PCI Express improved this with full-duplex communications, getting a GPU (and possibly a generic stream processor) to work can take a long time. This means it's usually counter-productive to use them for small datasets. Because changing the kernel is a rather expensive operation, the stream architecture also incurs penalties for small streams, a behaviour referred to as the short stream effect.
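The trade-off described above can be illustrated with a simple linear cost model. This is a sketch under stated assumptions: every stream pays a fixed setup cost (transfer latency, kernel change) plus a constant cost per element, and the CPU is assumed to have no setup cost. The function names and any numbers fed into them are hypothetical, not measurements.

```python
def stream_time(n_elements, setup_ns, per_element_ns):
    """Total time for one stream: fixed setup cost plus per-element work."""
    return setup_ns + n_elements * per_element_ns


def break_even_elements(cpu_per_element_ns, accel_setup_ns, accel_per_element_ns):
    """Smallest stream length at which the accelerator is no slower than the CPU.

    Returns None when the accelerator is not faster per element, because the
    setup cost can then never be recovered.
    """
    gain = cpu_per_element_ns - accel_per_element_ns
    if gain <= 0:
        return None
    # Ceiling division on integers: smallest n with n * gain >= setup.
    return -(-accel_setup_ns // gain)
```

Under this model, an accelerator that is ten times faster per element but costs 100,000 ns to set up only pays off for streams of roughly eleven thousand elements or more when the CPU needs 10 ns per element. Shorter streams are dominated by the fixed cost, which is the short stream effect.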

Pipelining is a very widespread and heavily used practice on stream processors, with GPUs featuring pipelines exceeding 200 stages. The cost for switching settings is dependent on the setting being modified but it is now considered to always be expensive. To avoid those problems at various levels of the pipeline, many techniques have been deployed such as 'über shaders' and 'texture atlases'. Those techniques are game-oriented because of the nature of GPUs, but the concepts are interesting for generic stream processing as well.

Examples

- The Blitter in the Commodore Amiga is an early (circa 1985) graphics processor capable of combining three source streams of 16 component bit vectors in 256 ways to produce an output stream consisting of 16 component bit vectors. Total input stream bandwidth is up to 42 million bits per second. Output stream bandwidth is up to 28 million bits per second.

- Imagine,[10] headed by Professor William Dally of Stanford University, is a flexible architecture intended to be both fast and energy efficient. The project, originally conceived in 1996, included architecture, software tools, a VLSI implementation and a development board, and was funded by DARPA, Intel and Texas Instruments.

- Another Stanford project, called Merrimac,[11] is aimed at developing a stream-based supercomputer. Merrimac intends to use a stream architecture and advanced interconnection networks to provide more performance per unit cost than cluster-based scientific computers built from the same technology.

- The Storm-1 family from Stream Processors, Inc, a commercial spin-off of Stanford's Imagine project, was announced during a feature presentation at ISSCC 2007. The family contains four members ranging from 30 GOPS to 220 16-bit GOPS (billions of operations per second), all fabricated at TSMC in a 130 nanometer process. The devices target the high end of the DSP market including video conferencing, multifunction printers and digital video surveillance equipment.

- GPUs are widespread, consumer-grade stream processors[2] designed mainly by AMD and Nvidia. Various generations to be noted from a stream processing point of view:

- Pre-R2xx/NV2x: no explicit support for stream processing. Kernel operations were hidden in the API and provided too little flexibility for general use.

- R2xx/NV2x: kernel stream operations became explicitly under the programmer's control but only for vertex processing (fragments were still using old paradigms). No branching support severely hampered flexibility but some types of algorithms could be run (notably, low-precision fluid simulation).

- R3xx/NV4x: flexible branching support although some limitations still exist on the number of operations to be executed and strict recursion depth, as well as array manipulation.

- R8xx: Supports append/consume buffers and atomic operations. This generation is the state of the art.

- AMD FireStream brand name for product line targeting HPC

- Nvidia Tesla brand name for product line targeting HPC

- The Cell processor from STI, an alliance of Sony Computer Entertainment, Toshiba Corporation, and IBM, is a hardware architecture that can function like a stream processor with appropriate software support. It consists of a controlling processor, the PPE (Power Processing Element, an IBM PowerPC) and a set of SIMD coprocessors, called SPEs (Synergistic Processing Elements), each with independent program counters and instruction memory, in effect a MIMD machine. In the native programming model all DMA and program scheduling is left up to the programmer. The hardware provides a fast ring bus among the processors for local communication. Because the local memory for instructions and data is limited, the only programs that can exploit this architecture effectively either require a tiny memory footprint or adhere to a stream programming model. With a suitable algorithm the performance of the Cell can rival that of pure stream processors. However, this nearly always requires a complete redesign of algorithms and software.
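The Blitter entry above mentions combining three source streams "in 256 ways". One way to read that number: a bitwise function of three inputs has 2^3 = 8 possible input combinations, and choosing an output bit for each gives 2^8 = 256 truth tables. The Python sketch below applies such an 8-bit table to three 16-bit words. The function name `blit_word` and the bit ordering of the table index are assumptions made for illustration, not a description of the actual hardware registers.

```python
def blit_word(a, b, c, table):
    """Combine three 16-bit words bitwise using an 8-bit truth table.

    For each bit position, the three source bits form an index 0..7
    (assumed ordering: a is the high bit, c the low bit). Bit `index`
    of `table` is the output bit for that position.
    """
    out = 0
    for bit in range(16):
        index = ((((a >> bit) & 1) << 2)
                 | (((b >> bit) & 1) << 1)
                 | ((c >> bit) & 1))
        out |= ((table >> index) & 1) << bit
    return out
```

With this ordering, table 0xF0 copies the first source, 0xC0 computes the AND of the first two sources, and 0x5A computes the XOR of the first and third.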

Stream programming libraries and languages

Most programming languages for stream processors start with Java, C or C++ and add extensions which provide specific instructions to allow application developers to tag kernels and/or streams. This also applies to most shading languages, which can be considered stream programming languages to a certain degree.

Non-commercial examples of stream programming languages include:

- Ateji PX Free Edition, which enables a simple expression of stream programming, the Actor model, and the MapReduce algorithm on the JVM

- Auto-Pipe, from the Stream Based Supercomputing Lab at Washington University in St. Louis, an application development environment for streaming applications that allows authoring of applications for heterogeneous systems (CPU, GPGPU, FPGA). Applications can be developed in any combination of C, C++, and Java for the CPU, and Verilog or VHDL for FPGAs. CUDA is currently used for Nvidia GPGPUs. Auto-Pipe also handles coordination of TCP connections between multiple machines.

- ACOTES Programming Model: language from Polytechnic University of Catalonia based on OpenMP

- BeepBeep, a simple and lightweight Java-based event stream processing library from the Formal Computer Science Lab at Université du Québec à Chicoutimi

- Brook language from Stanford

- CAL Actor Language: a high-level programming language for writing (dataflow) actors, which are stateful operators that transform input streams of data objects (tokens) into output streams.

- Cal2Many, a code generation framework from Halmstad University, Sweden. It takes CAL code as input and generates different target-specific languages including sequential C, Chisel, parallel C targeting the Epiphany architecture, ajava & astruct targeting the Ambric architecture, etc.

- DUP language from Technical University of Munich and University of Denver

- HSTREAM: a Directive-Based Language Extension for Heterogeneous Stream Computing[12]

- RaftLib - open source C++ stream processing template library originally from the Stream Based Supercomputing Lab at Washington University in St. Louis

- SPar - C++ domain-specific language for expressing stream parallelism from the Application Modelling Group (GMAP) at Pontifical Catholic University of Rio Grande do Sul

- Sh library from the University of Waterloo

- Shallows, an open source project

- S-Net coordination language from the University of Hertfordshire, which provides separation of coordination and algorithmic programming

- StreamIt from MIT

- Siddhi from WSO2

- WaveScript, a functional stream processing language, also from MIT.

- Functional reactive programming could be considered stream processing in a broad sense.

Commercial implementations are either general purpose or tied to specific hardware by a vendor. Examples of general purpose languages include:

- AccelerEyes' Jacket, a commercialization of a GPU engine for MATLAB

- Ateji PX Java extension that enables a simple expression of stream programming, the Actor model, and the MapReduce algorithm

- Embiot, a lightweight embedded streaming analytics agent from Telchemy

- Floodgate, a stream processor provided with the Gamebryo game engine for PlayStation 3, Xbox 360, Wii, and PC

- OpenHMPP, a 'directive' vision of Many-Core programming

- PeakStream,[13] a spinout of the Brook project (acquired by Google in June 2007)

- RapidMind, a commercialization of Sh (acquired by Intel in August 2009)

- TStreams,[14][15] Hewlett-Packard Cambridge Research Lab

Vendor-specific languages include:

- Brook+ (AMD hardware optimized implementation of Brook) from AMD/ATI

- CUDA (Compute Unified Device Architecture) from Nvidia

- Intel Ct - C for Throughput Computing

- StreamC from Stream Processors, Inc, a commercialization of the Imagine work at Stanford

Event-Based Processing

- Apama - a combined Complex Event and Stream Processing Engine by Software AG

- WSO2 Stream Processor by WSO2

Batch File-Based Processing (emulates some aspects of actual stream processing, but generally with much lower performance)

Continuous Operator Stream Processing

Stream Processing Services:

- IBM Streams

References

1. A SHORT INTRO TO STREAM PROCESSING

2. FCUDA: Enabling Efficient Compilation of CUDA Kernels onto FPGAs

3. IEEE Journal of Solid-State Circuits: 'A Programmable 512 GOPS Stream Processor for Signal, Image, and Video Processing', Stanford University and Stream Processors, Inc.

4. Khailany, Dally, Rixner, Kapasi, Owens and Towles: 'Exploring VLSI Scalability of Stream Processors', Stanford and Rice University.

5. Gummaraju and Rosenblum, 'Stream processing in General-Purpose Processors', Stanford University.

6. Kapasi, Dally, Rixner, Khailany, Owens, Ahn and Mattson, 'Programmable Stream Processors', Universities of Stanford, Rice, California (Davis) and Reservoir Labs.

7. Eric Chan. 'Stanford Real-Time Programmable Shading Project'. Research group web site. Retrieved March 9, 2017.

8. 'The Imagine - Image and Signal Processor'. Group web site. Retrieved March 9, 2017.

9. 'Merrimac - Stanford Streaming Supercomputer Project'. Group web site. Archived from the original on December 18, 2013. Retrieved March 9, 2017.

10. Imagine

11. Merrimac

12. Memeti, Suejb; Pllana, Sabri (October 2018). HSTREAM: A Directive-Based Language Extension for Heterogeneous Stream Computing. IEEE. doi:10.1109/CSE.2018.00026. Retrieved 30 December 2018.

13. PeakStream unveils multicore and CPU/GPU programming solution

14. TStreams: A Model of Parallel Computation (Technical report).

15. TStreams: How to Write a Parallel Program (Technical report).

External links

- Press Release Launch information for AMD's dedicated R580 GPU-based Stream Processing unit for enterprise solutions.

- Benchmark for streaming computation engines.[1]

- Chintapalli, Sanket; Dagit, Derek; Evans, Bobby; Farivar, Reza; Graves, Thomas; Holderbaugh, Mark; Liu, Zhuo; Nusbaum, Kyle; Patil, Kishorkumar; Peng, Boyang Jerry; Poulosky, Paul (May 2016). 'Benchmarking Streaming Computation Engines: Storm, Flink and Spark Streaming' (PDF). 2016 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW). IEEE. pp. 1789–1792. doi:10.1109/IPDPSW.2016.138. ISBN 978-1-5090-3682-0.


Is it possible to configure multiple database servers (all hosting the same database) to execute a single query simultaneously?

I'm not asking about executing queries using multiple CPUs simultaneously - I know this is possible.

UPDATE

What I mean is something like this:

- There are two servers: Server1 and Server2

- Both servers host database Foo and both instances of Foo are identical

- I connect to Server1 and submit a complicated (lots of joins, many calculations) query

- Server1 decides that some calculations should be made on Server2 and some data should be read from that server, too - appropriate parts of the query are sent to Server2

- Both servers read data and perform necessary calculations

- Finally, results from Server1 and Server2 are merged and returned to the client

All this should happen automatically, without need to explicitly reference Server1 or Server2. I mean such parallel query execution - is it possible?

UPDATE 2

Thanks for the tips, John and wuputah.

I am researching ways of increasing both availability and capacity of the MOSS database backend. So what I'm looking for is some kind of out-of-the-box SQL Server load balancing solution that would be transparent to the application, because I cannot modify the application in any way. I guess SQL Server has no such feature (and Oracle, as far as I understand it, does: it is RAC, mentioned by wuputah).

UPDATE 3

A quote from the Top Tips for SQL Server Clustering article:

Let's start by debunking a common misconception. You use MSCS clustering for high availability, not for load balancing. Also, SQL Server does not have any built-in, automatic load-balancing capability. You have to load balance through your application's physical design.

Marek Grzenkowicz


3 Answers

What you're really talking about is a clustering solution. It looks like SQL Server and Oracle have solutions to this, but I don't know anything about them. I can guess they would be very costly to buy and implement.

Possible alternate suggestions would be as follows:

- Use master-slave replication, and do your complex read queries from the slave. All writes must go to the master, which are then sent to the slave, so things stay in sync. This helps things go faster because the slave only has to worry about the writes coming from the master, which are already predetermined on behalf of the slave (no deadlocks etc). If you're looking to utilize multiple servers, this is the first place I would start.

- Use master-master replication. This means that all writes from both servers go to each other, so they stay in sync (at least theoretically). This has some of the same benefits as master-slave but you don't have to worry about writes going to one server instead of the other. The more common use of master-master replication is for failover support; master-slave is really better suited to performance.

- Use the feature John Sansom talked about. I don't know much about it, but it seems its basis is splitting your database into tables on different servers, which will have some benefits as well as drawbacks. The big issue is that since the two systems can't share memory, they will have to share a lot of data over the network to compute complex joins.

Hope this helps!

RE Update 1:

If you can't modify the application, there is hope, but it might be a bit complicated. If you were to set up master-slave replication, you could then set up a proxy to send read queries to the slave(s) and write queries to the master(s). I've seen this done with MySQL, but not SQL Server. That's a bit of a problem unless you want to write the proxy yourself.
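To give a feel for what such a proxy has to decide, here is a minimal Python sketch of the routing rule only. It is not a working database proxy and not tied to any product: the class name `ReadWriteRouter` and the server names are made up, and the "first word is SELECT" test is a deliberate simplification that ignores transactions, stored procedures and statements like SELECT ... INTO.

```python
class ReadWriteRouter:
    """Send plain SELECTs to replicas (round robin), everything else to the master."""

    def __init__(self, master, replicas):
        self.master = master
        self.replicas = list(replicas)
        self._next = 0

    def route(self, sql):
        """Return the name of the server that should run `sql`."""
        words = sql.split(None, 1)
        verb = words[0].upper() if words else ""
        # Anything that is not clearly a read goes to the master to be safe.
        if verb != "SELECT" or not self.replicas:
            return self.master
        server = self.replicas[self._next % len(self.replicas)]
        self._next += 1
        return server
```

For example, with one master and two replicas, consecutive SELECT statements alternate between the replicas while an UPDATE always lands on the master. A real proxy would also have to speak the database's wire protocol and cope with replication lag.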

This has been discussed on SO previously, so you can find more information there.

RE Update 2:

Microsoft's clustering might not be designed for performance, but that's Microsoft's fault. That's still the level of complexity you're talking about here. If they say it won't help, then your options are limited to those above and by what you do with your application (like sharding, splitting into multiple databases, etc).

wuputah

Yes I believe it is possible, well sort of, let me explain.

You need to look into and research the use of Distributed Queries. A distributed query runs across multiple servers and is typically used to reference data that is not stored locally.

For example, Server A may hold my Customers table and Server B holds my Orders table. It is possible using distributed queries to run a query that references both Server A and Server B, with each server managing the processing of its local data (which could incorporate the use of parallelism).
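To make the idea concrete without two real servers, here is a small runnable stand-in in Python. It uses SQLite's `ATTACH DATABASE` to put Customers and Orders in two separate in-memory databases and joins across them in one query. This is only an analogy: both databases live in one process here, the alias `server_b`, the table contents and the function names are invented for the example, and on SQL Server the equivalent setup would go through linked servers and four-part names instead.

```python
import sqlite3


def build_demo():
    """Create two in-memory databases standing in for Server A and Server B."""
    conn = sqlite3.connect(":memory:")                      # "Server A"
    conn.execute("ATTACH DATABASE ':memory:' AS server_b")  # "Server B"
    conn.execute(
        "CREATE TABLE main.Customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE server_b.Orders "
        "(id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
    conn.executemany("INSERT INTO main.Customers VALUES (?, ?)",
                     [(1, "Ada"), (2, "Grace")])
    conn.executemany("INSERT INTO server_b.Orders VALUES (?, ?, ?)",
                     [(10, 1, 25.0), (11, 1, 5.0), (12, 2, 7.5)])
    return conn


def totals_per_customer(conn):
    """One query that references tables held in both databases."""
    rows = conn.execute(
        "SELECT c.name, SUM(o.total) "
        "FROM main.Customers AS c "
        "JOIN server_b.Orders AS o ON o.customer_id = c.id "
        "GROUP BY c.id, c.name ORDER BY c.id")
    return rows.fetchall()
```

The query text never changes when the tables move. Only the qualified names say where each table lives, which is roughly how a distributed query looks from the client side.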

Now in theory you could store the exact same data on each server and design your queries specifically so that only certain tables were referenced on certain servers, thereby distributing the query load. This is not true parallel processing however, in terms of CPU.

If your intended goal is to distribute the processing load of your application then the typical approach with SQL Server is to use Replication to distribute data processing across multiple servers. This method is also not to be confused with parallel processing.

I hope this helps but of course please feel free to pose any questions you may have.

John Sansom

Interesting question, but I'm struggling to get my head around this being beneficial for a multi-user system.

If I'm the only user, having half my query done on Server1 and the other half on Server2 sounds cool :)

If there are two concurrent users (let's say with queries of identical difficulty) then I'm struggling to see that this helps :(

I could have identical data on both servers and load balancing - so I get Server1, my mate gets Server2 - or I could have half the data on Server1 and the other half on Server2, and each will be optimised, and cache, just their own data - spreading the load. But whenever you have to do a merge to complete a query the limiting factor becomes the pipe-size between them.

Which is basically Federated Database Servers. Instead of having all my Customers on one server and all my Orders on the other I could, say, have my USA customers and their orders on one, and my European customers/orders on the other, and only if my query spans both is there any need for a merge step.
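Here's a toy Python sketch of that federated layout, just to show where the merge step comes in. The region names, order data and function names are all made up, and threads stand in for the two servers.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical partitions: each "server" holds only its own region's orders.
PARTITIONS = {
    "usa": [("alice", 120.0), ("bob", 80.0)],
    "europe": [("chloe", 50.0), ("dieter", 70.0)],
}


def local_total(region):
    """Work done entirely on one server: sum that region's order totals."""
    return sum(total for _, total in PARTITIONS[region])


def order_total(regions):
    """Ask only the partitions involved, then merge the partial results."""
    with ThreadPoolExecutor(max_workers=len(regions)) as pool:
        partials = list(pool.map(local_total, regions))
    # The merge step. It is trivial for a sum, but for a large join this is
    # where the data would have to cross the pipe between the servers.
    return sum(partials)
```

A query for `["usa"]` touches one partition and needs no real merge, while `["usa", "europe"]` has to combine results from both.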

Kristen


Applies to:

This document applies to SAP ECC 6.0, SAP Netweaver 2004s, SAP BANKING: Deposit Management.

Application Engine Parallel Processing Center

Summary

The document briefly explains how parallel processing can be implemented in SAP BANKING - DM using the standard interface. The most important elements of implementing parallel processing effectively are the DIVIDE AND CONQUER rule and the concurrent execution of similar threads implementing the same logic.

Author(s): Rahul Babukuttan

Created on: 13 November 2014

Author Bio

Rahul Babukuttan has around 4.5 years of SAP experience. He is an SAP Certified ABAP Consultant and has completed an M.Tech in Networking Technologies.

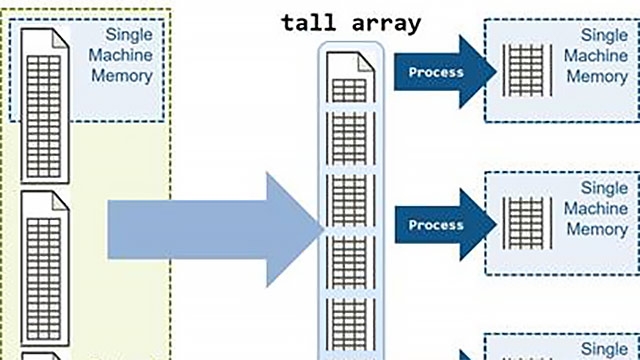

A SAP parent program automatically divides the workload into smaller packages based on a packet size, which can be predefined or calculated dynamically, and then starts a number of child processes to process those packages concurrently. Each child process handles one package at a time, and the program keeps engaging child processes until all packages are processed. A child process engaged in parallel processing can be a SAP dialog or background work process in a SAP system.
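The parent/child pattern described above can be sketched outside SAP in a few lines of Python. This is only an illustration of the idea, not ABAP and not the SAP framework: threads stand in for work processes, and the function names `make_packages`, `process_package` and `run_parallel` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def make_packages(object_keys, package_size):
    """Parent step: divide the workload into packages of at most package_size."""
    return [object_keys[i:i + package_size]
            for i in range(0, len(object_keys), package_size)]


def process_package(package):
    """Child step (placeholder logic): report how many keys were handled."""
    return len(package)


def run_parallel(object_keys, package_size, workers):
    """Keep `workers` children busy, one package each, until none are left."""
    packages = make_packages(object_keys, package_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(process_package, packages))
```

With 30 keys, a package size of 8 and 3 workers, four packages are created (8, 8, 8 and 6 keys), and the fourth is picked up as soon as one of the three workers becomes free.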

We all know that there are different types of work processes in SAP. Those details are outside the scope of this document; please see the reference section for more details.

I am taking a real-life scenario to help readers understand the key factors used to divide the workload. Assume I have picked 30 members of a team at random, with total IT experience ranging from 0 to 12 years. These 30 members are going to attend a walk-in drive. Assume that there are 3 interviewers there.

The drive coordinator has decided to run the process as parallel processing (PP), so he divides the group of people based on total work experience. He has 3 slots, as below.

Slot 1: Exp from 0 – 4 - Total members falling in this range is 12

Slot 2: Exp from 5 – 8 - Total members falling in this range is 13

Slot 3: Exp from 9 – 12 - Total members falling in this range is 5

These 3 slots are then executed in parallel. The elements of the scenario map to the framework as follows:

Interviewers – the available work processes / threads configured for the job (3)

Total number of records – 30

Range of experience – the key factor used to divide the workload (0 to 12). In technical terms we use contract IDs or business partners and pass them in the OBJECT KEY field of the WORK LIST OBJECT internal table.

Slots – interval distribution

Total members falling in the range – number of due objects (30)

Work list objects – number of records processed by each work process (the number of members in each slot). Refer to Step 7 in the technical implementation.

In the above example the coordinator chose predefined slots based on total IT experience; technically, this corresponds to package definition category 3. This approach has advantages and disadvantages.

Advantages: There is no need to populate the intervals. The PP framework takes care of it.

Disadvantages: Unequal load distribution. If we examine the slots, Slot 3 is very lightly loaded and Slot 2 is heavily loaded. To avoid this, set the package definition category to 1 and populate our own intervals so that the load is distributed equally. More technical details are explained in the sections below.
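The difference between predefined slots and self-defined intervals can be made concrete with a small Python sketch. The per-year head counts are invented purely so that the three predefined slots hold 12, 13 and 5 members as in the scenario; the greedy rule for choosing balanced boundaries is likewise an assumption, not the framework's algorithm.

```python
from collections import Counter

# Made-up experience values (years) for the 30 candidates, chosen only so that
# the predefined slots 0-4, 5-8 and 9-12 hold 12, 13 and 5 members.
MEMBERS_PER_YEAR = {0: 2, 1: 3, 2: 2, 3: 3, 4: 2, 5: 4, 6: 4, 7: 2, 8: 3,
                    9: 2, 10: 1, 11: 1, 12: 1}
EXPERIENCE = [year for year, n in MEMBERS_PER_YEAR.items() for _ in range(n)]


def count_per_slot(values, slots):
    """Count how many values fall into each inclusive (low, high) slot."""
    return [sum(1 for v in values if low <= v <= high) for low, high in slots]


def balanced_slots(values, n_slots):
    """Greedily close a slot once it holds at least len(values) / n_slots members."""
    counts = Counter(values)
    keys = sorted(counts)
    target = len(values) / n_slots
    slots, low, running = [], None, 0
    for key in keys:
        if low is None:
            low = key
        running += counts[key]
        # the last slot stays open so that it absorbs all remaining keys
        if running >= target and len(slots) < n_slots - 1:
            slots.append((low, key))
            low, running = None, 0
    if low is not None:
        slots.append((low, keys[-1]))
    return slots


if __name__ == "__main__":
    predefined = [(0, 4), (5, 8), (9, 12)]
    print(count_per_slot(EXPERIENCE, predefined))   # load with predefined slots
    own = balanced_slots(EXPERIENCE, 3)
    print(own, count_per_slot(EXPERIENCE, own))     # load with self-defined slots
```

With this sample data the self-defined boundaries give each interviewer the same number of candidates, which is the effect the developer aims for when populating the intervals manually.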

Technically speaking, we use a key field of the table, or a combination of key fields, to divide the workload. In the interface this is referred to as the work list object. The interface IF_AM_PP_PACKAGE has the parameter E_TAB_OBJKEY_WLO in the methods LOAD_DATA_WITH_RANGE / LOAD_DATA_WITH_TAB. For example, we can use CONTRACT_INT from the banking tables or, if we are not accessing it, the business partner. We can also concatenate a combination of key fields and populate the result in the object key, so that the workload is divided on that basis.

The parallel processing framework looks only at the object key field in the parameter E_TAB_OBJKEY_WLO. Depending on the selection screen parameters (dynamic with a package size, or non-dynamic with a number of packages), the object keys are divided evenly into packages. The division depends on the intervals from the DB, which are described in more detail later in this document.
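As a rough illustration of the two selection screen modes, the following Python sketch derives the number of packages either from a package size (dynamic) or from a fixed count (non-dynamic). The function and parameter names are hypothetical, and the rounding-up rule is an assumption; the framework's exact calculation is not described in this document.

```python
import math


def number_of_packages(object_keys, dynamic, package_size=None, fixed_packages=None):
    """Return how many packages a run would use.

    dynamic=True : derive the count from the number of due objects and package_size.
    dynamic=False: use the fixed number of packages from the selection screen.
    """
    if not dynamic:
        return fixed_packages
    due_objects = len(set(object_keys))   # count each object key only once
    return max(1, math.ceil(due_objects / package_size))


if __name__ == "__main__":
    keys = list(range(30)) + [0, 1]       # 30 distinct keys plus two duplicates
    print(number_of_packages(keys, dynamic=True, package_size=10))
    print(number_of_packages(keys, dynamic=False, fixed_packages=5))
```

Counting the keys without removing duplicates would yield 32 objects and therefore one package too many in this example, which is why an accurate count of due objects matters in the dynamic case.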

There are cases where the programmer can define the interval ranges and explicitly skip some intervals. If the package definition category is 1, the method GET_PACK_INTERVALS is triggered.

Example: Consider a scenario that processes 30k records from the table BCA_CONTRACT. The program is scheduled in production to process 30k records each day, and the last contract ID processed is stored in a custom table.

If we use the normal scenario in this case, throughput suffers.

How? If contract ID 555555 was the last one processed in the previous run and, in the next day's run, the first thread is created for the range 0000000 to 555556, then the first interval is wasted and the workload is not distributed equally.

Remedy: To increase throughput, we set the package definition category to 1 and maintain our own intervals, starting from 555556 instead of 000000.

How to implement this? In the method GET_PACK_INTERVALS we create our own intervals, each with a lower and an upper limit, and append them to the internal table E_TAB_OBJ_INTERVALS.
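The interval arithmetic behind this remedy can be sketched in Python as follows. It assumes numeric, roughly contiguous contract IDs and a known highest ID for the day (585555 here is an invented value); in the real implementation the resulting pairs would be the lower and upper limits appended to E_TAB_OBJ_INTERVALS.

```python
def intervals_from_checkpoint(last_processed, highest_key, n_packages):
    """Build up to n_packages inclusive (low, high) intervals covering
    last_processed + 1 .. highest_key, so no interval is spent on old keys."""
    start = last_processed + 1
    total = highest_key - start + 1
    if total <= 0:
        return []                       # nothing new to process
    base, extra = divmod(total, n_packages)
    intervals, low = [], start
    for i in range(n_packages):
        size = base + (1 if i < extra else 0)
        if size == 0:
            break                       # fewer keys than packages
        intervals.append((low, low + size - 1))
        low += size
    return intervals


if __name__ == "__main__":
    # Last run ended at 555555; 585555 is a hypothetical highest key for today.
    for low, high in intervals_from_checkpoint(555555, 585555, 3):
        print(low, high)
```

Every interval now starts after the stored checkpoint, so each thread receives a comparable share of unprocessed contracts.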

In the interface IF_AM_PP_PACKAGE, the following can be run in parallel:

- Selection logic from DB tables. Refer to Step 7 in the technical implementation.

- Processing logic after selection. Refer to Step 8 in the technical implementation.

The degree of parallelism is the number of SAP work processes that can be engaged at the same time to handle the workload. A higher degree means more SAP work processes for parallel processing, so more packages can be processed at the same time and the workload finishes faster. Usually the number of SAP work processes assigned to the application type associated with a program or job is constant and is configured initially.
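The effect of the degree of parallelism on elapsed time can be estimated with a small simulation. This Python sketch assumes that each package goes to whichever work process becomes free first and that the package durations are known in advance; both are simplifications, and the names are hypothetical.

```python
import heapq


def elapsed_time(package_durations, degree):
    """Simulate a run in which each package is given to the work process
    that becomes free first, and return the total elapsed time."""
    free_at = [0] * degree              # time at which each work process is free
    heapq.heapify(free_at)
    for duration in package_durations:
        start = heapq.heappop(free_at)  # earliest available work process
        heapq.heappush(free_at, start + duration)
    return max(free_at)


if __name__ == "__main__":
    packages = [5] * 12                 # 12 packages of 5 time units each
    for degree in (1, 3, 6):
        print(degree, elapsed_time(packages, degree))
```

In this model, doubling the number of work processes halves the elapsed time only as long as there are enough packages to keep every work process busy.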

There is a standard SAP PP tool framework interface, IF_AM_PP_PACKAGE. The methods provided by the interface, which need to be implemented, are listed below. Selected methods are explained briefly afterwards.

- CHECKS_AT_SELECTION_SCREEN

- ADJUST_APPLICATION_DATA

- ADJUST_LOG_PROFILE

- SET_APPLICATION_DATA

- GET_PACKAGE_DEFINITION

- GET_PACK_INTERVALS

- GET_PACK_OBJECTS

- GET_NO_OF_DUE_PACK_OBJECTS

- CREATE_INDIVIDUAL_PACKAGES

- DELETE_INDIVIDUAL_PACKAGES

- PROCESS_BEGIN_OF_RUN

- GET_COUNTER_DEFINITIONS

- PROCESS_BEGIN_OF_JOB

- PROCESS_END_OF_JOB

- PROCESS_END_OF_RUN

- PROCESS_BEGIN_OF_PACKAGE

- LOAD_DATA_WITH_RANGE

- LOAD_DATA_WITH_TAB

- LOCK_DATA

- UNLOCK_DATA

- SYNC_APPL_BUFFER

- PROCESS_DATA

- PROCESS_DATA_SINGLE

- ENRICH_COUNTER_TAB

- INIT_BUFFER_FOR_REPEAT

- GET_DESCR_FOR_OBJECT_LIST

- GET_DESCR_FOR_OBJECT

- ENRICH_PPO_CONTEXT

1. Define the application type, application, application parameter type (normally the standard structure BCA_STR_AM_BASIS_PP_PARAM), object and sub-object. This is done in transaction BANK_CUS_PPC.

2. After completing step 1, also define the implementation methods and the standard function modules:

- 0100 BCA_AM_BASIS_PP_CB_0100

- 0140 BCA_AM_BASIS_PP_CB_0140

- 0160 BCA_AM_BASIS_PP_CB_0160

- 0205 BCA_AM_BASIS_PP_CB_0205

- 0300 BCA_AM_BASIS_PP_CB_0300

- 1000 BCA_AM_BASIS_PP_CB_1000

- 1100 BCA_AM_BASIS_PP_CB_1100

- 1200 BCA_AM_BASIS_PP_CB_1200

- 1260 BCA_AM_BASIS_PP_CB_1260

- 1270 BCA_AM_BASIS_PP_CB_1270

- 1300 BCA_AM_BASIS_PP_CB_1300

- 1350 BCA_AM_BASIS_PP_CB_1350

- 1400 BCA_AM_BASIS_PP_CB_1400

- 1410 BCA_AM_BASIS_PP_CB_1410

3. Define the number of tasks for the application type under the following path:

SPRO -> SAP CIMG -> FS -> FOUNDATION -> Parallel processing and Job control -> Maintain Job distribution -> Enter Application type and No of tasks

4. Maintain the customer settings for application types, to set the number of repeats, under the following path:

SPRO -> SAP CIMG -> Cross Application Components -> General Application functions -> Parallel processing and Job Control -> Parallel Processing -> Maintain Customer settings for Application types

Is there a way for the PP framework to know the values of the selection screen parameters? Yes. This can be achieved with the steps below.

1. Create a parent program. Declare the application type and the custom class name (say ZCL_PP) inside the parent program. [Purpose: we create a custom class in order to use the methods of the interface IF_AM_PP_PACKAGE.]

2. Call the method CL_AM_PP_JOB_CONTROL=>MAPI_PP_PROCESS at START-OF-SELECTION. For better control of the program, declare the parameters of the method (e.g. application category, structure for parameters, number of packages, package size, dynamic flag and log type).

3. In the interface section of the class ZCL_PP, enter the name IF_AM_PP_PACKAGE.

4. SET_APPLICATION_DATA: The method parameter I_STR_GEN_PARAM holds all the selection screen values. Declare a static variable and move the values into it in this method, so that they are available to all other methods later on.

5. GET_NO_OF_DUE_PACK_OBJECTS: This method is needed only if the user has selected dynamic package determination on the selection screen and the package definition category is set to '3'. In that case we must accurately populate the number of entries to be processed in the parameter E_NO_OF_OBJECTS, making sure that duplicate entries are deleted before counting. If dynamic package determination is not selected on the selection screen, we can skip implementing this method.

6. GET_PACKAGE_DEFINITION: The value populated in this method determines which method is triggered. The parameter C_PACKDEFCATG can take the value 1, 2 or 3.

- If the package definition category is 1, it is the developer's responsibility to populate the intervals. The splitting into intervals should be done inside the method GET_PACK_INTERVALS. Please note that this method is not triggered if the package definition category is anything other than 1. The intervals can be created using the FM BCA_AR_PP_TO_GUID_INTV_CREA. Populate the lower and upper limits and append them to the internal table parameter E_TAB_OBJ_INTERVALS.

- If the package definition category is 3, the intervals are picked up automatically, based on the package size or dynamically. These intervals are predefined; I have not found the exact DB table that stores them.

- If the package definition category is 2, the method GET_PACK_OBJECTS is triggered. Further details are left for the reader to explore.

7. LOAD_DATA_WITH_RANGE: This is the method in which we write the select query, and it is the best place to do so. It runs in parallel threads, depending on the package size. Its parameters I_OBJNO_FROM and I_OBJNO_TO hold the lower and upper limits of the interval at run time. For example, inside this method we can write a select query like this:

    SELECT CONTRACT_INT
      FROM BCA_CONTRACT
      INTO TABLE itab
      WHERE contract_int >= I_OBJNO_FROM
        AND contract_int <= I_OBJNO_TO.

In this method, also remember to populate the work list objects in the parameter E_TAB_OBJKEY_WLO:

    LOOP AT itab INTO wa.
      " Copy the matching fields into the work list structure
      MOVE-CORRESPONDING wa TO wa_objlist.
      APPEND wa_objlist TO E_TAB_OBJKEY_WLO.
    ENDLOOP.

8. PROCESS_DATA: This method also runs in parallel threads and is triggered once LOAD_DATA_WITH_RANGE has finished. Here we write the logic for processing the data. Each entry populated in the table E_TAB_OBJKEY_WLO is copied automatically (after automatic deletion of duplicate entries) to the parameter C_TAS_OBJKEY_STATUS. This method can be thought of as the mass processing of data. Normally, if we need to change entries using BAPIs, we write that logic inside this method.

9. PROCESS_END_OF_JOB: This method also runs in parallel threads. It is triggered immediately before each parallel thread ends.

10. PROCESS_END_OF_RUN: This method runs in a single thread and is executed only once, after all the parallel threads have finished. Normally, this is where we write the final results to the output file.

11. ENRICH_PPO_CONTEXT: PPO errors can be passed inside this method.
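The order in which the methods of steps 7 to 10 fire can be modelled with a small Python sketch. It is not the SAP framework: the class, the `run` driver and the queue from which each job pulls its next interval are hypothetical stand-ins, used only to show which hooks run in parallel and which run once.

```python
import queue
import threading


class DemoPackageHandler:
    """Hypothetical stand-in for a class implementing the interface hooks."""

    def __init__(self, keys):
        self.keys = keys                # pretend database table of object keys
        self.events = []                # order in which the end hooks were called
        self.processed = []             # keys handled by process_data
        self._lock = threading.Lock()

    def load_data_with_range(self, low, high):
        # selection logic: distinct keys inside the interval (the work list)
        return sorted({k for k in self.keys if low <= k <= high})

    def process_data(self, worklist):
        # processing logic: here we only record what was handled
        with self._lock:
            self.processed.extend(worklist)

    def process_end_of_job(self, job_id):
        with self._lock:
            self.events.append(("end_of_job", job_id))

    def process_end_of_run(self):
        self.events.append(("end_of_run", None))


def run(handler, intervals, n_jobs):
    """Drive the hooks: parallel jobs first, then a single end-of-run call."""
    todo = queue.Queue()
    for interval in intervals:
        todo.put(interval)

    def job(job_id):
        while True:
            try:
                low, high = todo.get_nowait()
            except queue.Empty:
                break                   # no packages left for this job
            handler.process_data(handler.load_data_with_range(low, high))
        handler.process_end_of_job(job_id)   # once per job, just before it ends

    threads = [threading.Thread(target=job, args=(i,)) for i in range(n_jobs)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    handler.process_end_of_run()        # single-threaded, executed only once
    return handler


if __name__ == "__main__":
    h = run(DemoPackageHandler(list(range(1, 31))), [(1, 10), (11, 20), (21, 30)], 3)
    print(len(h.processed), h.events[-1])
```

The end-of-job hook is recorded once per job and the end-of-run hook always comes last, matching the sequence described in the steps above.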

Assume the interface under discussion processes contract IDs of type hexadecimal and length 16, and that the number of packages is 5 (fixed packages). The interval distribution is picked up as shown in the following table.

| Interval | Lower limit | Upper limit |
|---|---|---|
| Interval 1 | 0000000000000000 | 33333332FFFFFFFF |
| Interval 2 | 3333333300000000 | 66666665FFFFFFFF |
| Interval 3 | 6666666600000000 | 99999998FFFFFFFF |
| Interval 4 | 9999999900000000 | CCCCCCCBFFFFFFFF |
| Interval 5 | CCCCCCCC00000000 | FFFFFFFFFFFFFFFF |

Here each interval is processed by its own thread in parallel, and the object keys falling in an interval are processed in that thread. There are no interdependencies between the threads, because each thread processes different contract IDs.
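The five interval limits listed above can be reproduced with a short Python sketch. How the framework actually derives them is not documented here; the rule used below (split the leading half of the key into equal steps, let the trailing half run from all zeros to all F) is an assumption chosen because it yields exactly those values.

```python
def hex_intervals(n_packages, key_len=16):
    """Split the hexadecimal key space of key_len digits into n_packages
    inclusive (low, high) intervals by stepping the leading half of the key."""
    half_bits = (key_len // 2) * 4          # bits in the leading half of the key
    step = (1 << half_bits) // n_packages   # equal step on the leading half
    top = (1 << (key_len * 4)) - 1          # highest possible key
    intervals = []
    for i in range(n_packages):
        low = (i * step) << half_bits
        if i == n_packages - 1:
            high = top                      # last interval takes the remainder
        else:
            high = (((i + 1) * step) << half_bits) - 1
        intervals.append((f"{low:0{key_len}X}", f"{high:0{key_len}X}"))
    return intervals


if __name__ == "__main__":
    for number, (low, high) in enumerate(hex_intervals(5), start=1):
        print(f"Interval {number} - {low} {high}")
```

Because the intervals do not overlap, every contract ID belongs to exactly one interval and therefore to exactly one thread.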

Standard tcodes related to FPP objects

- 1. FPPOBJ – Maintain Intervals

- 2. FPP